Why Choose Us For Web Development Services?

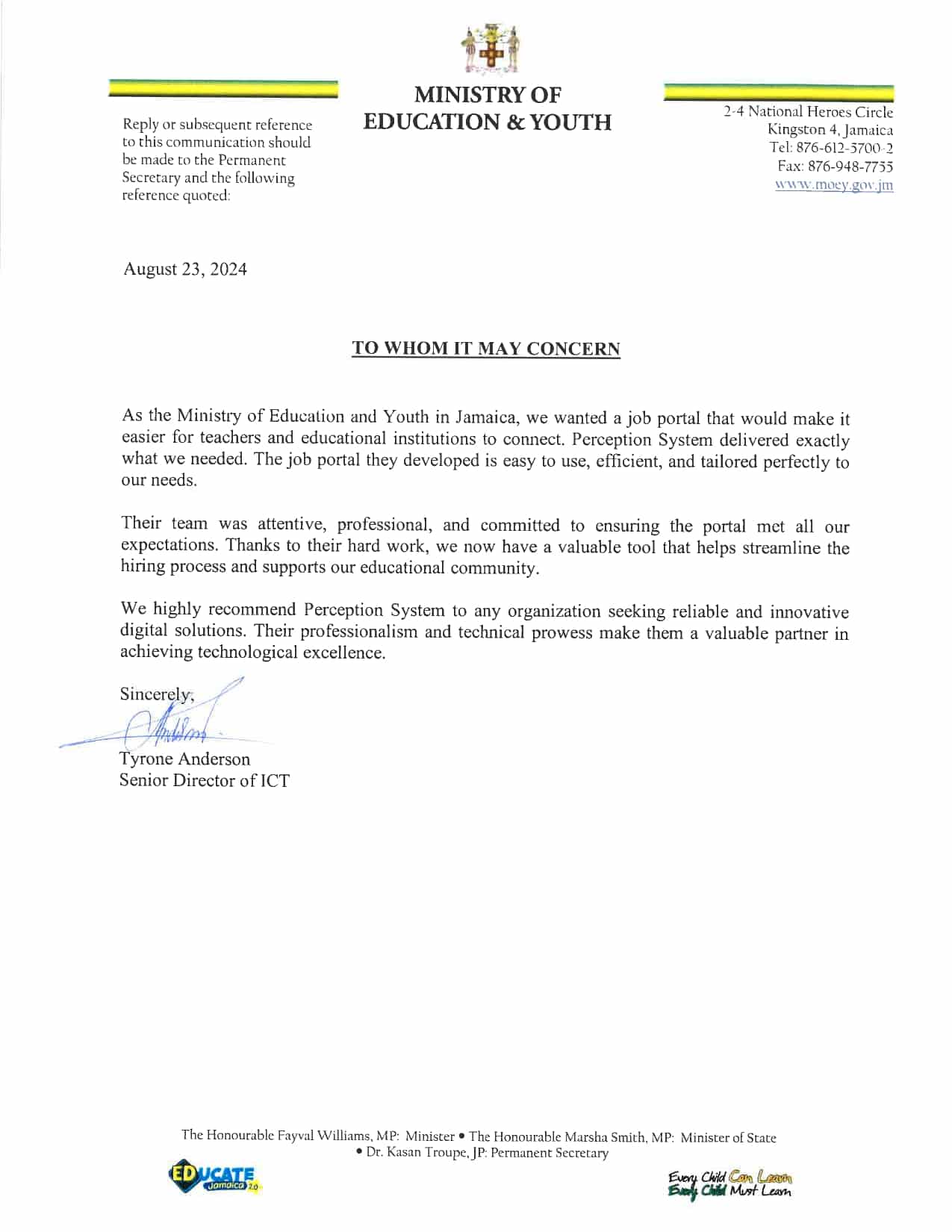

Perception System is a trusted web development company in the USA. We have 20+ years of experience in custom web development services, serving clients across the globe. As a web development service provider, we have a proven track record of delivering complex web app solutions from planning to delivery.

Right Consultancy

5000+

Serving clients globally using modern web design and development technologies and frameworks.

Vast Experience

20+

Leverage our 20+ years of experience in building robust web design and development solutions.

Client Satisfaction

95%

Ensuring client retention through successful project delivery and continuous collaboration.

Web Development Solutions We Provide

Intranet and Extranet Portals

Web-based Enterprise Solutions

Cloud-Based / SaaS Development


Full-Scale Web Development

At Perception System, we provide full-scale web development services to help businesses build a strong online presence. Our team of experienced web developers works with you to create custom web solutions that meet your unique requirements and exceed your expectations. We offer a wide range of web development services, from portals and backend systems to frontend and CMS development.

Case Studies

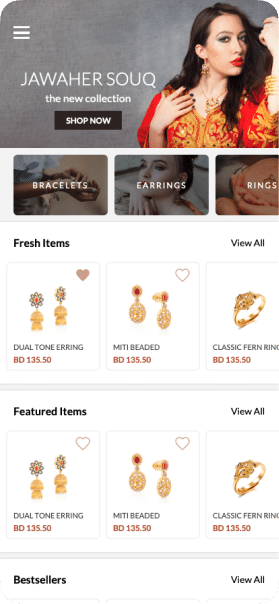

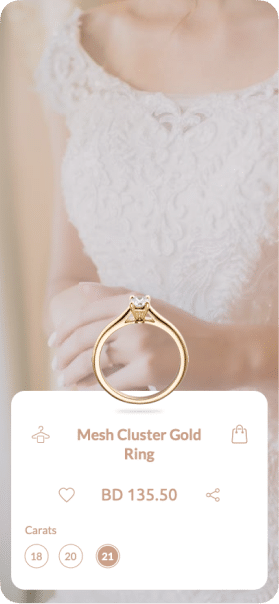

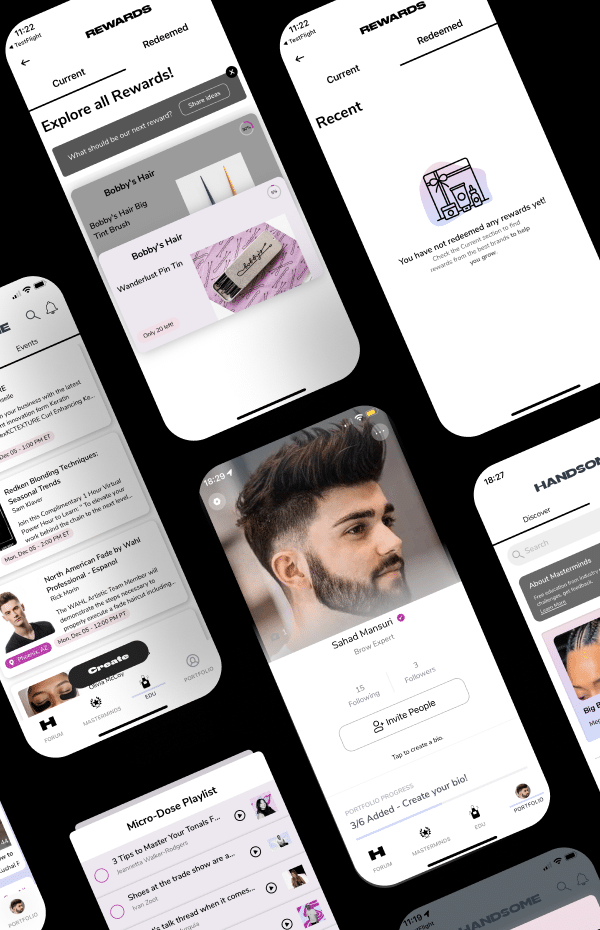

HANDSOME – HANDSOME is the place for hairstylists, colorists, makeup artists, estheticians, and beauty pros to build their industry network, post questions, find answers, and apply for jobs.

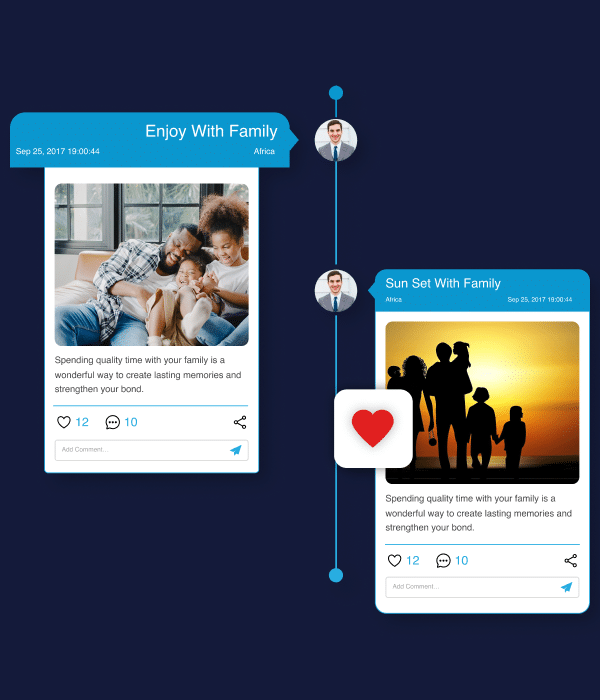

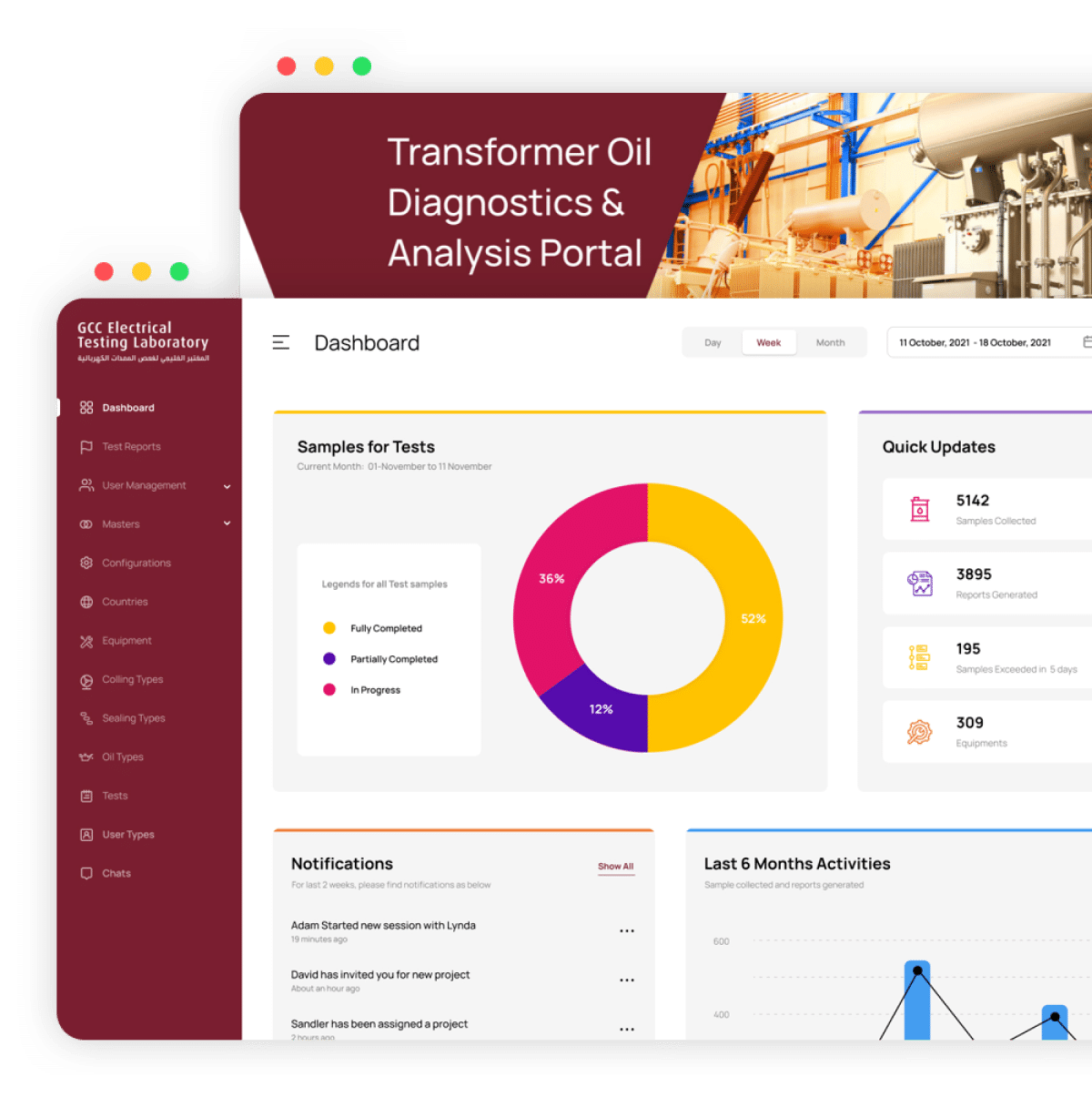

GCC – Before this solution, managing the routines of a transformer oil testing lab in the electrical field was neither easy nor effective.

Our Expertise

Our experienced web engineers bring expertise to every aspect of custom web development. We continuously evolve our skills to help our clients meet their goals.

Web Portal Development

We create a wide range of web infrastructure tailored to your specific business needs. Whether it serves your customers, vendors, or partners, we offer comprehensive web development services, including UI/UX design, development, analytics, integrations, QA, and ongoing support, for a smooth web application experience.

Enterprise Portal Development

Online Travel Portal Development

Real Estate Portal Development

Backend Development

We have more than two decades of experience providing backend development services. Our agency has a record of completing complex web applications with sophisticated backend architectures. Our solutions are extensible, support on-premise deployment, and are built around end-user needs. As a custom website development company, we have expertise in the core backend technologies listed below.

Web Application Development

Building robust web apps is our core competency. We have a time-tested approach for everything from startup products to complex enterprise web applications, allocating resources to deliver customizable, high-quality web applications efficiently and cost-effectively.

Custom Web App Development Services

Web App Integration Services

Workflow & Project Management

Business Web Applications

Frontend Development

Leverage the power of the latest frontend technologies to create an inspiring user interface for your web applications. Following UX best practices, our web development services deliver a top-notch experience for your users. Our team of talented and creative frontend developers can design an engaging UI for you.

ReactJS, Vue.js, JavaScript

HTML5, CSS3

LESS, Sass

Graphic Design, UI/UX

CMS Website Development

Take complete control of your website’s content. We build custom websites that let you update content instantly, execute a content-driven business strategy, and keep your content up to date.

WordPress, Joomla, Drupal CMS Development

Magento, WooCommerce, Shopify E-Commerce Solutions

Reengineering, Custom Module & Plugin Development

Optimization, Upgrades & Migrations


Web Development Technologies & Frameworks We Use

Frontend

- React JS
- Vue.js
- JavaScript
- HTML5
- CSS
- Less
- Sass

Backend

- Node.js
- PHP
- Python

Frameworks

- Laravel
- Express
- Django

Average Cost of Different Web Solutions

Static Website Development Cost

A static website is a basic website that contains fixed content and does not require a database or other dynamic features. A static website typically costs between $500 and $5,000, depending on the size and complexity of the site. Static websites are a good option for small businesses or individuals who need a simple online presence.

CMS-Based Website Development Cost

A CMS-based website lets you manage and update your website’s content through a content management system (CMS) such as WordPress, Drupal, or Joomla. A CMS-based website typically costs between $2,000 and $10,000, depending on the complexity of the site and the customization required.

E-commerce Website Development Cost

An e-commerce website is an online store that allows customers to purchase products or services directly from the website. An e-commerce website typically costs between $5,000 and $50,000, depending on the size of the product catalog, the complexity of the checkout process, and the level of customization required.

About Perception System

We have been in business as a custom web development company since 2001. Our clients include Harvard University and Georgetown University, as well as promising US startups such as HANDSOME and Lugelo, which have raised USD 5 million in seed funding.

Featured Web Development Expertise

We have developed multidimensional solutions with enterprise-level functionality.

Skilled & Certified Team

Our team of web developers includes certified professionals with years of experience.

Scalability & Performance

The web development solutions we provide ensure high performance and scalability.

Cost-Effective Web Development

We aim to help you reach your goals with a high-quality and cost-effective solution.